I spoke to Appcelerator CEO Jeff Haynie yesterday, just before today’s announcement of the opening of an EMEA headquarters in Reading. It has only 4 or 5 staff at the moment, mostly sales and marketing, but will expand into professional services and training.

Appcelerator’s product, Titanium, is a cross-platform (though see below) toolkit for building both desktop and mobile applications. The mobile aspect makes this a hot market to be in, and the company says it has annual growth of several hundred percent. “We’re not profitable yet, but we’ve got about 1300 customers now,” Haynie told me. “On the developer numbers side, we’ve got about 235,000 mobile developers and about 35,000 apps that have been built.”

In November 2011, Red Hat invested in Appcelerator and announced a partnership based on using Titanium with OpenShift, Red Hat’s cloud platform.

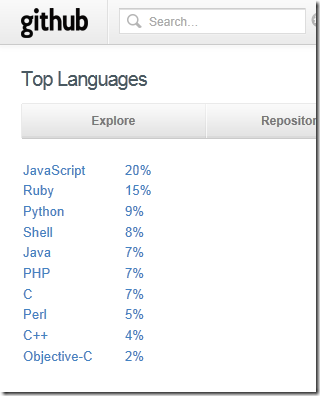

Another cross-platform mobile toolkit is PhoneGap, which has received a lot of attention since Adobe acquired Nitobi, the company that built it, and PhoneGap itself was donated to the Apache Software Foundation. I asked Haynie to explain how Titanium’s approach differs from that of PhoneGap.

Technically what we do and what PhoneGap does is a lot different. PhoneGap is about how do you take HTML and wrap it into a web browser and put it into a native container and expose some of the basic APIs. Titanium is really about how you expose JavaScript for an API for native capabilities, and have you build a real native application or an HTML5 application. We offer both a true native application – I mean the UI is native and you get full access to all the API as if you had written it native, but you are writing it in JavaScript. We have also got now an HTML5 product where that same codebase can be deployed into an HTML5 web-driven interface. We think that is wildly different technically and delivers a much better application.

Haynie agrees that cross-platform tools can compromise performance and design, and even resists placing Titanium in the cross-platform category:

Titanium is a real native UI. When you’re in an iPhone TableView it’s actually a real native TableView, not an HTML5 table that happens to look like a TableView. You get the best of both worlds. You get a JavaScript-driven, web-driven API, but when you actually create the app you get a real app. Then we have an open extensible API so it’s really easy if you want to expose additional capabilities or bring in third-party libraries, very similar to what you do in Java with JNI [Java Native Interface].

The category has got a bit of a bad rap. We wouldn’t really describe ourselves as cross-platform. We’re really an API that allows you to target multiple different devices. It’s not a write-once run anywhere, it’s really API driven.

80% of our core APIs are meant to be portable. Filesystems, threads, things like that. Even some of the UI layer, basic views and buttons and things like that. But then you have a Titanium iOS namespace [for example] which allows you to access all the iOS-specific APIs, that aren’t portable.
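To make the split Haynie describes a little more concrete, here is a minimal sketch in TypeScript of a toolkit with a portable core and an optional platform-specific namespace. It is not Titanium code: `Toolkit`, `makeToolkit`, `buildScreen` and every other name are hypothetical, invented purely for illustration, and the strings only stand in for the native widgets a real toolkit would create.

```typescript
// Hypothetical model of a "portable core plus platform namespace" API.
// None of these names are Titanium's real API; they only illustrate the shape.

type Platform = "ios" | "android";

// Portable surface: the same call works on every target.
interface PortableUI {
  createButton(title: string): string;
}

// Platform-specific surface: only present when running on iOS.
interface IOSOnly {
  createNavigationGroup(): string;
}

interface Toolkit {
  platform: Platform;
  ui: PortableUI;
  ios?: IOSOnly; // absent on non-iOS targets
}

function makeToolkit(platform: Platform): Toolkit {
  // The portable call maps to a different native widget on each platform.
  const widget = platform === "ios" ? "UIButton" : "android.widget.Button";
  const toolkit: Toolkit = {
    platform,
    ui: { createButton: (title) => `${widget}("${title}")` },
  };
  if (platform === "ios") {
    toolkit.ios = { createNavigationGroup: () => "UINavigationController" };
  }
  return toolkit;
}

// Application code: portable calls run everywhere, while the iOS-only
// call is guarded so the same source still works on other targets.
function buildScreen(tk: Toolkit): string[] {
  const parts = [tk.ui.createButton("OK")];
  if (tk.ios) {
    parts.push(tk.ios.createNavigationGroup());
  }
  return parts;
}

console.log(buildScreen(makeToolkit("ios")));
console.log(buildScreen(makeToolkit("android")));
```

The point of the sketch is the guard in `buildScreen`: most of the code is shared, and only the non-portable part has to check which platform it is running on.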

I asked Haynie for his perspective on the mobile platform wars. Apple and Android dominate, but what about the others?

RIM and Microsoft are fighting for third place. I would go long on Microsoft. Look at Xbox, look at the impact of long-term endeavours, they have the sustainability and the investment power to play the long game, especially in the enterprise. We’ll see Microsoft make significant strides in Windows 8 and beyond.

Even within Android, there are going to be a lot of different types of Android that will be both complementary and competitive with Google. They will continue to take the lion’s share of the market. Apple will be a smaller but highly profitable and vertically integrated ecosystem. In my opinion Microsoft is a bit of a bridge between both. They’re more open than Apple, and more vertically integrated than Google, with tighter standardisation and stacks.

I wouldn’t quite count RIM out. They still have a decent market share, especially in certain parts of the world and certain types of application. But they’ve got a long way to go with their new platform.

So will Titanium support Windows 8 “Metro” apps, running on the new WinRT runtime?

Yes, we don’t have a date or anything to announce, but yes.

I was also interested in his thoughts on Adobe, particularly as there is some flow of employees from Adobe to Appcelerator. Is he seeing migration of developers from Flex, Flash and AIR to Titanium?

Adobe has had a tremendously successful product in Flash, the web wouldn’t be the web today if it wasn’t for Flash, but the advent of HTML5 is encroaching on that. How do they move to the next big thing? I don’t know if they have a next big thing. And they’re dealing in an ecosystem that’s not necessarily level ground. That’s churning lots of dissenting and different opinions inside Adobe, is what we’re hearing.

We’re seeing a large degree of people that are Flash, ActionScript oriented that are migrating. We’ve hired a number of people from Adobe. Quite a lot of people in our QA group actually came out of the Adobe AIR group. Adobe is a fantastic company, the question is what’s their future and what’s their plan?

Finally, we discussed web standards. With a product that depends on web technology, does Appcelerator get involved in the HTML5 standards process? The question prompted an intriguing response with regard to WebKit, the open source browser engine.

We’re heavily involved in the Eclipse Foundation, but not in the W3C today. I spent about 3 and a half years on the W3C in my last company, so I’m familiar with the process and the people. The W3C process is largely driven – and I know the PhoneGap people have tried to get involved – by the WHAT working group and the HTML5 working group, which ultimately are driven by the browser manufacturers … it’s a largely vendor-oriented, fragmented space right now, that’s the challenge. We still haven’t managed to get a royalty-free, IPR-free codec for video.

I’d also say that one of the biggest factors pushing HTML5 is less the standardisation itself and more WebKit. WebKit has become the de facto [standard], which has really been driven by Apple and Google and against Microsoft. That’s driving HTML5 forward as much as the working group itself.