This morning’s Twitter feed told me that codespaces.com has closed. The company offered developers a repository and project management service, using Git or Subversion.

The cause was a malicious intrusion into the company’s admin console for Amazon Web Services, which it used as the back end for its services. The intruder demanded money and, when that was not forthcoming, deleted a large amount of data. From the company’s statement:

> An unauthorised person who at this point who is still unknown (All we can say is that we have no reason to think its anyone who is or was employed with Code Spaces) had gained access to our Amazon EC2 control panel and had left a number of messages for us to contact them using a hotmail address
>
> Reaching out to the address started a chain of events that revolved around the person trying to extort a large fee in order to resolve the DDOS.
>
> Upon realisation that somebody had access to our control panel we started to investigate how access had been gained and what access that person had to data in our systems, it became clear that so far no machine access had been achieved due to the intruder not having our Private Keys.
>
> At this point we took action to take control back of our panel by changing passwords, however the intruder had prepared for this and had a already created a number backup logins to the panel and upon seeing us make the attempted recovery of the account he locked us down to a non-admin user and proceeded to randomly delete artefacts from the panel. We finally managed to get our panel access back but not before he has removed all EBS snapshots, S3 buckets, all AMI’s, some EBS instances and several machine instances.
>
> In summary, most of our data, backups, machine configurations and offsite backups were either partially or completely deleted.

According to the statement, the company is no longer viable and will cease trading. Some data has survived, and customers are advised to contact support to recover what they can.

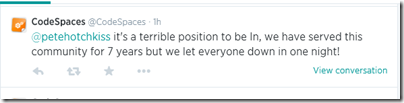

It is a horrible situation for both the company and its customers.

How can risks like this be avoided? That is the question, and the answer is complex: both backup and security are difficult.

Cloud providers such as Amazon offer excellent resilience and redundancy. That is, if a hard drive or a server fails, other copies are available and there should be no loss of data, or at worst, only a tiny amount.

Resilience is not backup, though: if you delete data, the system will dutifully delete it from every copy.
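To make the distinction concrete, here is a minimal Python sketch. It is a toy in-memory model, not a description of how AWS actually stores data, and the class names `ReplicatedStore` and `SnapshotBackup` are hypothetical. Replication survives the loss of a copy, but a delete is applied to every replica; only a point-in-time copy taken earlier still holds the data.

```python
import copy


class ReplicatedStore:
    """Toy model of resilience: every write and every delete goes to all replicas."""

    def __init__(self, replicas: int = 3) -> None:
        self.replicas = [dict() for _ in range(replicas)]

    def put(self, key, value):
        for replica in self.replicas:
            replica[key] = value

    def delete(self, key):
        # The delete is replicated faithfully, exactly like a write.
        for replica in self.replicas:
            replica.pop(key, None)

    def lose_replica(self, index: int):
        # Simulate a failed disk or server: that copy disappears entirely.
        del self.replicas[index]

    def get(self, key):
        # Any surviving replica can serve the read.
        for replica in self.replicas:
            if key in replica:
                return replica[key]
        return None


class SnapshotBackup:
    """Toy model of backup: point-in-time copies that later changes do not touch."""

    def __init__(self) -> None:
        self.snapshots = {}

    def take(self, label: str, store: ReplicatedStore):
        # Deep copy, so later writes or deletes on the store cannot alter the snapshot.
        self.snapshots[label] = copy.deepcopy(store.replicas[0])

    def restore(self, label: str, key):
        return self.snapshots[label].get(key)


store = ReplicatedStore()
backup = SnapshotBackup()
store.put("repo.git", "commit history")

store.lose_replica(0)                       # hardware failure
print(store.get("repo.git"))                # commit history (resilience at work)

backup.take("day-1", store)
store.delete("repo.git")                    # mistaken or malicious delete
print(store.get("repo.git"))                # None: every replica deleted it
print(backup.restore("day-1", "repo.git"))  # commit history (from the snapshot)
```

What the toy leaves out is where the snapshots live and who is allowed to delete them. If the same admin credentials can remove both the live data and the snapshots, the backup shares the live data’s single point of failure.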

Backup is what lets you go back in time. Every system administrator has met users who demand the recovery of documents they deleted themselves.

The part of the CodeSpaces story that puzzles me is that the intruder was able to delete the off-site backups. I presume, therefore, that these backups were online and accessible from the same admin console, a single point of failure.

As it happens, I attended Cloud World Forum in London yesterday and noticed a stand from Spanning, which offers cloud backup for Google Apps and Salesforce.com, with Office 365 coming soon. I remarked light-heartedly that surely the cloud never fails, and was told that yes, the cloud never fails, but you can still lose data through human error, sync errors or malicious intruders. Indeed.

Is there a glimmer of hope for CodeSpaces – is it possible that Amazon Web Services can go back in time and restore customer data that was mistakenly or maliciously deleted? I presume from the gloomy statement that it cannot (though I am asking Amazon); but if this is something the public cloud cannot provide, then some other strategy is needed to fill that gap.

For historical reference, note the Code Spaces straplines, especially this one: “Real Time Backups. You make a change, we make a back up – it’s that simple”

That, I suspect, is the problem. What is described is resilience, not backup. With a daily backup that is stored offline, you might lose a day’s worth of data, but that should be the limit.

Tim

Yea well ya know…computers n stuff

As a former user of CodeSpaces, my first reaction was DOH! That was followed by the thought that this is where CodeSpaces went wrong:

1) No real offsite backups. As the author mentioned, in the cloud offsite backup isn’t just a matter of geography; it is also a matter of ‘cloud’ separation, so that there is no single point of failure.

2) CodeSpaces probably (judging by the wording on their site) tried to handle the incident internally. Unless you have expertise in this area, the best approach is to get in touch with a firm that specializes in it.

3) CodeSpaces didn’t plan their recovery, while the extortionist was lying in wait, watching activity in the AWS console. Once CodeSpaces realized the console had been compromised, they should first have contacted Amazon to lock down the whole AWS account, not just individual user accounts. With the account locked down, it should have been possible to arrange measures such as having Amazon snapshot the content to a separate, secure location.

In our case, for source control, we lost version history but not the latest revision of our work, so we have weathered the storm without any noticeable loss.

It’s always especially troubling to learn about cases of data loss caused by malicious activity. In our experience at cloudfinder.com it’s not the most frequent cause; honest user and admin mistakes are far more common and can be equally disastrous, but the intent to cause harm still sets a case like codespaces.com apart from others. It just sucks.

As a cloud-to-cloud backup company we’re obviously biased, but I would still like to share four thoughts about our approach to securing cloud data, which I hope can help anyone who is upset by the CodeSpaces story and may be having second thoughts about the cloud:

1) Everything is the same, only different. The need for _second location_ backups never went away just because everyone moved to the cloud. Our standard answer to “who needs a _second location_ backup for a SaaS solution [like] Google Apps?” is “Everyone who isn’t insane”.

2) Very rarely is not the same as never. When critically analysing a potential SaaS, PaaS or IaaS setup, my advice is to bring out your risk matrix and map out what could happen, how likely or unlikely it seems, and what the consequences would be. Will your company be out of business if a certain event happens, and is it possible that event will occur once every 10 years?

3) Think about your disaster recovery backup strategy the way you think about fire insurance. Do not be tricked into extrapolating one, or even five, damage-free years into perpetuity, any more than you would cancel your fire insurance policy after the same period, having concluded that the office obviously isn’t prone to catching fire. It’s always too late to act once the data loss event has occurred. Until the moment CodeSpaces was attacked, the risk of a hacker attack extrapolated from historic experience would most likely have been zero.

4) No, it’s not by definition a flaw in the offering of a provider like AWS that data can be deleted, whether by mistake or intentionally. The traditional approach to backup has been to keep the backup physically separated from the source data, and in my opinion that is even more true in the cloud. Let’s say CodeSpaces had foreseen this attack and decided to take measures to protect themselves. They’d probably have started doing daily incremental or full backups. Where should these be stored: in AWS, or elsewhere? Back to the risk matrix: any critical (black swan) event that stopped CodeSpaces from accessing AWS at all would render backups stored there as useless as the original data. So if CodeSpaces had found a _second location_ backup option for their data, AWS would no longer have held both the original data and the backups; CodeSpaces would also have built extra resilience into their disaster recovery planning, and taken an important step away from cloud vendor lock-in.
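The risk-matrix and fire-insurance reasoning in these points can be made concrete with a little arithmetic. The Python sketch below is an illustration only: the events, annual probabilities and 1-5 impact scale are hypothetical placeholders rather than measured data, and it assumes each year is independent. It ranks risks by likelihood × impact, one simple way to fill in a risk matrix, and shows why a run of damage-free years says little about a rare event.

```python
# Hypothetical illustration only: events, probabilities and impact scores are
# placeholders, not measured data. Years are assumed to be independent.


def prob_at_least_once(annual_p: float, years: int) -> float:
    """Chance that an event with annual probability annual_p occurs at least once."""
    return 1 - (1 - annual_p) ** years


def prob_clean_run(annual_p: float, years: int) -> float:
    """Chance of `years` consecutive years with no occurrence at all."""
    return (1 - annual_p) ** years


def score(annual_p: float, impact: int) -> float:
    """Simple likelihood x impact score used to rank rows of the risk matrix."""
    return annual_p * impact


# (event, annual probability, impact on a 1-5 scale where 5 = out of business)
RISKS = [
    ("admin deletes data by mistake", 0.30, 3),
    ("console hijacked, data and backups deleted", 0.10, 5),
    ("cloud provider unreachable for days", 0.02, 4),
]

for event, p, impact in sorted(RISKS, key=lambda r: score(r[1], r[2]), reverse=True):
    print(f"{event}: score {score(p, impact):.2f}, "
          f"P(at least once in 10 years) = {prob_at_least_once(p, 10):.0%}")

# A "once every 10 years" event still leaves good odds of five quiet years.
print(f"P(five damage-free years | p = 0.1) = {prob_clean_run(0.1, 5):.0%}")  # 59%
```

With an annual probability of 0.1 (roughly “once every 10 years”), the chance of five clean years in a row is 0.9^5 ≈ 0.59, so a spotless track record is entirely consistent with a real and possibly business-ending risk.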