During my recent visit to Beijing I went along to the Hong Qiao market. It was quite an experience, with lots of fun gadgets on display, mostly fake but with plenty of good deals to be had.

I did not buy much but could not resist an iPad Bluetooth keyboard. I have been meaning to try one of these for a while. The one I picked is integrated into a “leather” case.

The packaging is well future-proofed:

Of course I had to haggle over the price, and we eventually settled on ¥150, about £15.00 or $24.00.

It comes with a smart 12-page manual, which you will enjoy if you like slightly mangled English, though there are some small differences between the product and the manual. A power LED is described in the manual but seems not to exist. The manual makes a couple of references to Windows and in fact the keyboard does also work with Windows, but there is nothing silly like a Windows key and this really is designed for the iPad.

No manufacturer is named, which is odd as the vendor insisted that it is “original”, though the box does say “Made in China”.

The design is straightforward. The iPad slots into what becomes the top flap of the case. Open the case, and you can set the iPad into an upright position for typing. The lower flap of the case has a magnetic clasp, which works fine. It is a bit of a nuisance though, as it gets a little in the way when you are in typing mode, and you cannot fold it back to tuck it out of the way.

I noticed a few blemishes in the case; possibly I had a second-grade example.

But I have not found any technical problems.

The unit is supplied with a micro USB cable for charging. It did not take long to charge, and I think it was already half-charged when I bought it.

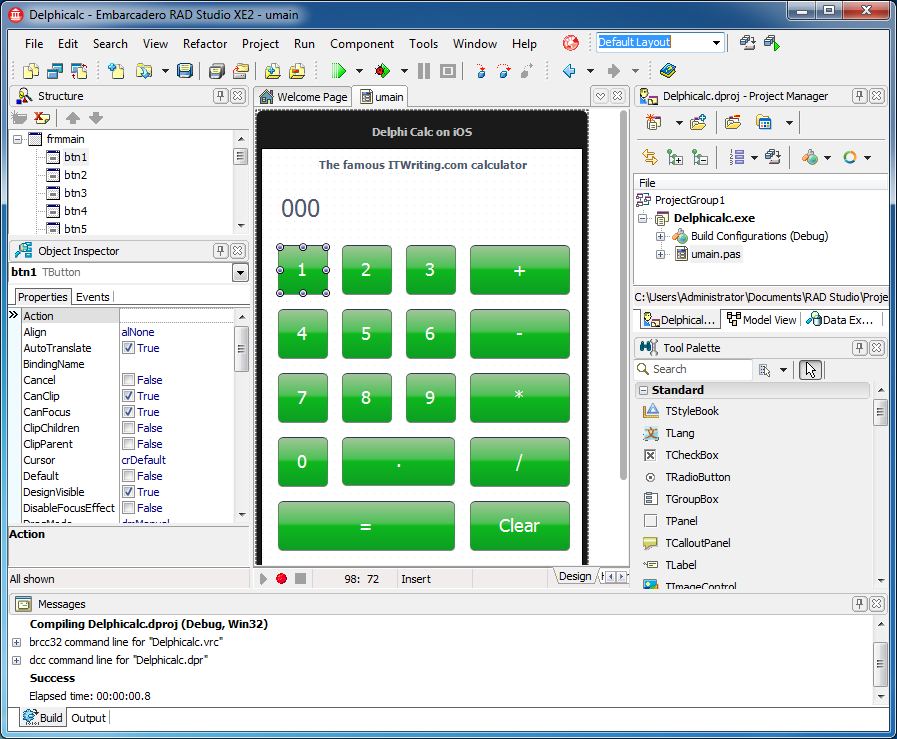

Here is a closer look at the keyboard itself.

Once charged, you turn on the power and pair it to your iPad by pressing the Connect button. I had a little difficulty with this until I discovered that you must press down until you feel a distinct click; only then does it go into pairing mode. If you then go into the iPad’s Bluetooth settings you will see the keyboard as an available device. Connect, and you are prompted with a code. Type this code on the keyboard to complete the pairing.

The power switch on the keyboard is impossibly small and fiddly to use. If you know how small a standard micro USB socket is, you will get the scale in this picture:

So can you just leave the keyboard on? The keyboard claims a standby time of 100 days, so maybe that will be OK, though the manual warns:

When you are finished using your keyboard or you will be required after the keyboard to carry, so don’t forget to set aside the keyboard to switch the source OFF, turn off the keyboard’s power to extend battery life.

Note: When you normally using the keyboard, or if you are not using the keyboard and didn’t turn off the power switch, please don’t fold or curly, so you will have been working at the keyboard, it will greatly decrease you using the keyboard.

I think this means that turning it off is recommended.

Now the big question: how is it in use? It is actually pretty good. I can achieve much faster text input with the keyboard than using the on-screen option, and it is great to see your document without a virtual keyboard obscuring half the screen.

The keyboard is the squishy type and claims to be waterproof. In fact:

It is waterproof, dustproof, anti-pollution, anti-acid, waterproof for silicone part

according to the manual, as well as having:

Silence design, it will not affect other people’s rest.

which is good to know.

The keyboard has a US layout, but shift-3 gets me a £ sign and alt-2 a € symbol so I am well covered.

There are a number of handy shortcut keys along the top which cover brightness, on-screen keyboard display, search, iTunes control, and a few other functions. There is a globe key that I have not figured out; it looks as if it should open Safari but it does not. There are also Fn, Control, Alt and Command keys, cursor keys, and Shift keys at left and right. Most of the keyboard shortcuts I have seen listed for iPad keyboards in general seem to work here as well.

Learning keyboard shortcuts is one of the things you need to do in order to get the best from this. For example, press alt+e and then any vowel to get an acute accent, press alt+backtick and then any vowel to get a grave accent, and so on. Finding the right shortcuts is a bit of an adventure and I have more to discover. Not everything is covered; I have not found any way to apply bold from the keyboard in Pages, for example. I would also love to find an equivalent to alt-tab on Windows, which switches through running apps. There is a Home key which you can double-tap, but then you have to tap the screen to select an app (unless you know better).
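For the curious, the accent shortcuts behave like "dead keys": the first press picks an accent and the next letter receives it. Here is a rough Python sketch of that idea. It only models the two combinations mentioned above; the `DEAD_KEYS` table and the `compose` function are my own illustrative names, not anything taken from the keyboard or from iOS.

```python
import unicodedata

# Hypothetical table for the two dead keys described in the post.
# The key names are just labels chosen for this sketch.
DEAD_KEYS = {
    "alt+e": "\u0301",  # combining acute accent
    "alt+`": "\u0300",  # combining grave accent
}


def compose(dead_key: str, letter: str) -> str:
    """Return the character you get from a dead key followed by a letter."""
    mark = DEAD_KEYS[dead_key]
    # NFC normalisation merges the base letter and the combining mark
    # into one precomposed character where Unicode defines one.
    return unicodedata.normalize("NFC", letter + mark)


if __name__ == "__main__":
    for key in DEAD_KEYS:
        print(key, " ".join(compose(key, vowel) for vowel in "aeiou"))
```

Running it prints the five vowels with each accent applied, which is a handy way to check what a given combination ought to produce.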

I am pleased with the keyboard, though given the defects in the case and irritations like the tiny power switch it is not really a huge bargain. I find it thought-provoking though. Is iPad + keyboard all I need when on the road, or have I just recreated an inferior netbook? The size and weight are not much different.

Unlike some, I do still see value in the netbook, which has a better keyboard, a battery life that is nearly as good (at least it was when new), handy features like USB, ethernet and VGA ports, and the ability to run Microsoft Office and other Windows apps.

I am also finding that while I like the iPad keyboard for typing, the integrated case has a downside. If you just want to use the iPad as a tablet, the keyboard gets in the way. Maybe a freestanding Bluetooth keyboard is better, like the official Apple item, though that means another item kicking around in your bag.

In the end, the concept needs a little more design work. Having a keyboard in the case is a good idea, but it needs to be so slim that it does not bulk up the package much and gets out of the way when not needed. Perhaps some sort of fabric keyboard is the answer.

Incidentally, if you hanker after one of these but cannot get to the Hong Qiao market, try eBay or Amazon, where a number of keyboard cases look very similar to mine. Look carefully though; I noticed one by “LuvMac” which lacks a right Shift key, causing some complaints. Mine does have a right Shift key; perhaps it is a later revision.

Hmm, I have just realised that the lady on the stall forgot to give me a receipt or warranty …