Here are two things we learn from Jensen Harris’s post of 18 May.

First, Microsoft cares more about WinRT and Metro, the new tablet-oriented user interface in Windows 8, than about the desktop. In the section entitled Goals of the Windows 8 user experience, Harris refers almost exclusively to WinRT apps. Further, he asks the question: what is the role of desktop in Windows 8? He then answers it himself:

It is pretty straightforward. The desktop is there to run the millions of existing, powerful, familiar Windows programs that are designed for mouse and keyboard. Office. Visual Studio. Adobe Photoshop. AutoCAD. Lightroom. This software is widely-used, feature-rich, and powers the bulk of the work people do on the PC today.

Does that mean the desktop is for legacy, like XP Mode in Windows 7? Harris denies it:

We do not view the desktop as a mode, legacy or otherwise—it is simply a paradigm for working that suits some people and specific apps.

He adds, though, that “We think in a short time everyone will mix and match” desktop and Metro apps – although he does not call them Metro apps; he calls them “new Windows 8 apps.”

Second, Microsoft considers that the poor reaction to the Consumer Preview can be fixed by tweaking the detail rather than by changing the substance of how Windows 8 is designed.

But fundamentally, we believe in people and their ability to adapt and move forward. Throughout the history of computing, people have again and again adapted to new paradigms and interaction methods—even just when switching between different websites and apps and phones. We will help people get off on the right foot, and we have confidence that people will quickly find the new paradigms to be second-nature.

In fact, this post is peppered with references to negative reactions to previous versions of Windows. Microsoft is presuming that this is normal and that history will repeat:

Although some people had critical reactions and demanded changes to the user interface, Windows 7 quickly became the most-used OS in the world.

This is revisionist, as I am sure Harris and his team are aware. The reaction to Windows 7 was mainly positive, from the earliest preview on. It was better than Windows Vista; it was better than Windows XP.

Windows Vista, on the other hand, had a troubled launch and was widely disliked. User Account Control and its constant approval prompts were part of the problem, but more serious was that OEMs released Vista machines with underpowered hardware, further slowed down by foistware, and in many cases Vista worked badly out of the box. You could get Vista working nicely with sufficient effort, but many just stayed with Windows XP.

The failure of Vista was damaging to Microsoft, but mitigated in that most users simply skipped a version and waited for Windows 7. The situation now is more serious for Microsoft, both because of the continuing popularity of the Mac and the rise of tablets, especially Apple’s iPad.

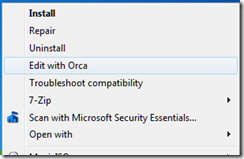

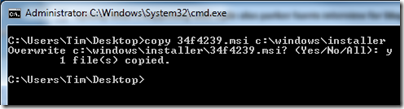

It is precisely because of that threat that Microsoft is making such a big bet on Metro and WinRT. The reasoning is that while shipping a build of Windows that improves on 7 would please the Microsoft platform community, it would be ineffective in countering the iPad. It would also fail to address problems inherent in Windows: lack of isolation between applications, and between applications and the operating system; the complexity of application installs and the difficulty of troubleshooting them when they go wrong; and the unsuitability of Windows for touch control.

There is also a hint in this most recent post that classic Windows uses too much power:

Once we understood how important great battery life was, certain aspects of the new experience became clear. For instance, it became obvious early on in the planning process that to truly reimagine the Windows experience we would need to reimagine apps as well. Thus, WinRT and a new kind of app were born.

Another key point: Microsoft’s partnership with hardware manufacturers has become a problem, since they damage the user experience with trialware and low-quality utilities. The Metro-style side of Windows 8 fixes that by offering a locked-down environment. This will be most fully realised in Windows RT, Windows on ARM, which only allows WinRT apps to be installed.

Microsoft decided that only a new generation of Windows, a “reimagining”, would be able to compete in the era of BYOD (Bring Your Own Device).

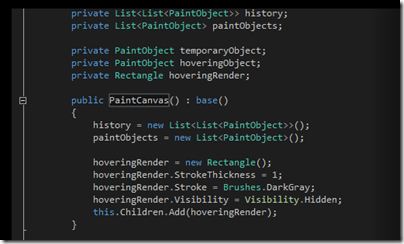

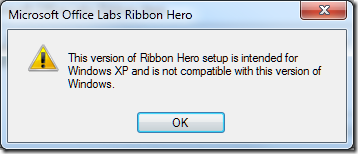

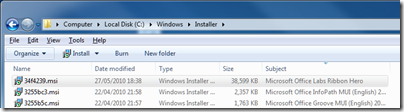

One thing is for sure: the Windows team under Steven Sinofsky does not lack courage. They have form too. Many of the key players worked on the Office 2007 Ribbon UI, which was also controversial at the time, since it removed the familiar drop-down menus that had been in every previous version of Office. They stuck by their decision and refused to add an option to restore the menus, thereby forcing users to use the ribbon even if they disliked it. That strategy was mostly successful. Users got used to the ribbon, and there was no mass refusal to upgrade from Office 2003, nor a substantial migration to OpenOffice, which still has drop-down menus.

I have an open mind about Windows 8. I see the reasoning behind it, and agree that it works better on a real tablet than on a traditional PC or laptop, or worst of all, a virtual machine. Harris says:

The full picture of the Windows 8 experience will only emerge when new hardware from our partners becomes available, and when the Store opens up for all developers to start submitting their new apps.

Agreed; but it also seems that Windows 8 will ship with a number of annoyances which at the moment Microsoft looks unlikely to fix. These are mainly in the integration, or lack of it, between the Metro-style UI and the desktop. I can live without the Start menu, but will miss the taskbar with its guide to running applications and its preview thumbnails; these remain in the desktop but do not include Metro apps. Having only full-screen apps can be an irritation, and I wonder if the commitment to the single-app “immersive UI” has been taken too far. When working in Windows 8 I miss the little clock that sits in the notification area; you have to swipe to see the equivalent, and the fast and fluid UI is making me work harder than before.

I believe Microsoft will listen to complaints like these, but probably not until Windows 9. I also believe that by the time Windows 9 comes around the computing landscape will look very different; and the reception won by Windows 8 will be a significant factor in how it is shaped.