Microsoft has announced a new OneDrive API for programmatic access to its cloud storage service. It is a REST API which Microsoft Program Manager Ryan Gregg says the company is also using internally for OneDrive apps. The new API replaces the previous Live SDK, though the Live SDK will continue to be supported. One advantage of the new API is that you can retrieve changes to files and folders in order to keep an offline copy in sync, or to upload changes made offline.
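To make the sync idea concrete, here is a minimal Python sketch of how a client might fold a batch of reported changes into a local index. The record fields (`id`, `name`, `deleted`) and the token value are assumptions made for illustration, not the documented OneDrive response format, and no network call is made.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical shape of one change record; the real API's field names may differ.
Change = Dict[str, object]


def apply_changes(
    index: Dict[str, str],
    changes: List[Change],
    next_token: Optional[str],
) -> Tuple[Dict[str, str], Optional[str]]:
    """Return an updated copy of the local index (item id -> name)
    together with the token to send on the next sync request."""
    updated = dict(index)
    for change in changes:
        item_id = str(change["id"])
        if change.get("deleted"):
            # Item was removed remotely: drop it locally if present.
            updated.pop(item_id, None)
        else:
            # Item was added or modified remotely: record its current name.
            updated[item_id] = str(change["name"])
    return updated, next_token


local = {"1": "notes.txt", "2": "old.mp3"}
batch = [
    {"id": "2", "deleted": True},
    {"id": "3", "name": "photo.jpg"},
    {"id": "1", "name": "notes-v2.txt"},
]
local, token = apply_changes(local, batch, "token-after-batch")
print(local)
```

Storing the returned token alongside the index is the point of the design: the next request can then ask only for what changed since that token, rather than listing everything again.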

Unfortunately, as far as I can tell, this does not extend to transferring only the changed part of a file; you still have to delete and replace the entire file. Imagine you had a music file in which only the metadata had changed. With the OneDrive API, you have to upload or download the whole file, rather than simply applying the difference. However, you can upload files in segments in order to handle large files, up to 10GB.
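A segmented upload boils down to sending consecutive byte ranges of the file. The sketch below only plans those ranges and formats them as standard HTTP `Content-Range` values. The function name `plan_segments` and the segment size are my own choices, and whether the OneDrive API expects exactly this header on each request is an assumption.

```python
from typing import List, Tuple


def plan_segments(total_size: int, segment_size: int) -> List[Tuple[int, int, str]]:
    """Split total_size bytes into (start, end, Content-Range value) tuples.
    The end offset is inclusive, as in the HTTP Content-Range header."""
    if total_size <= 0 or segment_size <= 0:
        raise ValueError("sizes must be positive")
    segments = []
    start = 0
    while start < total_size:
        # The last segment may be shorter than segment_size.
        end = min(start + segment_size, total_size) - 1
        segments.append((start, end, f"bytes {start}-{end}/{total_size}"))
        start = end + 1
    return segments


# A 25-byte "file" sent in 10-byte segments (tiny numbers for readability).
for start, end, header in plan_segments(25, 10):
    print(header)
```

In a real client each tuple would drive one request that reads the bytes from `start` to `end` from disk, so a failed segment can be retried without resending the whole file.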

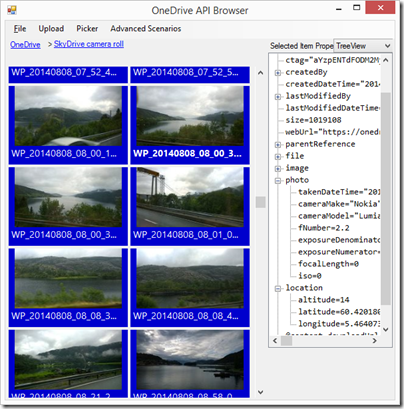

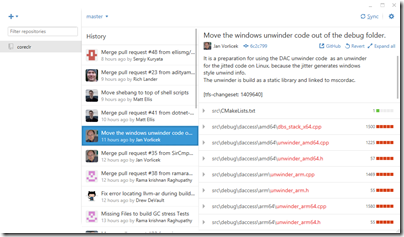

I have worked with file upload and download using the Azure Blob Storage service, so I was interested to see what is now on offer for OneDrive. I went along to the OneDrive API site on GitHub and downloaded the Windows/C# API explorer, which is a Windows Forms application (why not WPF?). This uses a OneDrive SDK library which has been coded as a portable class library, for use in desktop, Windows 8, Windows Phone 8.1 and Windows Phone Silverlight 8.

I have to say this is not the kind of sample I like. I prefer short snippets of code that demonstrate things like: here is how you authenticate, here is how you iterate through all the files in a folder, here is how you download a file, here is how you upload a file, and so on. All these features are there in this app, but finding them means weaving your way through all the UI code and async calls to piece together how it actually works. On top of that, despite all those async calls, there are some performance issues which seem to be related to the smart tiles which display a preview image, where possible, from each file and folder. I found the UI becoming unresponsive at times, for example when retrieving my large SkyDrive camera roll.
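As an illustration of the kind of short snippet meant here, this is roughly what "iterate through all the files in a folder" could look like with the UI stripped away. It is a sketch under assumptions: `fetch_json` is a hypothetical callable that takes a URL and returns the decoded JSON body, and the `value` and `@odata.nextLink` field names are assumed for the paged response rather than taken from the sample app. The demo uses canned pages instead of real HTTP requests.

```python
from typing import Callable, Dict, Iterator

# Hypothetical helper type: given a URL, return the decoded JSON response.
FetchJson = Callable[[str], Dict[str, object]]


def iter_children(first_url: str, fetch_json: FetchJson) -> Iterator[Dict[str, object]]:
    """Yield every item in a folder listing, following next-page links."""
    url = first_url
    while url:
        page = fetch_json(url)
        # Assumed response shape: items under "value", next page URL
        # under "@odata.nextLink" (absent on the last page).
        for item in page.get("value", []):
            yield item
        url = page.get("@odata.nextLink")


# Canned pages standing in for real responses.
fake_pages = {
    "page-1": {
        "value": [{"name": "a.txt"}, {"name": "b.txt"}],
        "@odata.nextLink": "page-2",
    },
    "page-2": {"value": [{"name": "c.txt"}]},
}
names = [item["name"] for item in iter_children("page-1", fake_pages.__getitem__)]
print(names)
```

Passing the fetch function in as a parameter keeps authentication and HTTP details out of the listing logic, which is what makes a snippet like this short enough to read at a glance.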

Gregg makes no reference in his post to OneDrive for Business, so my assumption is that the new API only applies to consumer OneDrive. Microsoft has said, though, that it intends to unify its two OneDrive services, so maybe a future version will be able to target both.

At a quick glance the API looks different to the Azure Blob Storage API. They are different services but with some overlap in terms of features and I wonder if Microsoft has ever got all its cloud storage teams together to work out a common approach to their respective APIs.

I do not intend to be negative. OneDrive is an impressive and mostly free service and the API is important for lots of reasons. If you find the OneDrive integration in the current Windows 10 preview too limited (as I do), at least you now have the ability to code your own alternative.