The Register reports that Google now runs all its cloud apps in Docker-like containers; this is in line with what I heard at the QCon developer event earlier this year, where Docker was the hot topic. What caught my eye, though, was Trevor Pott’s comment comparing not Hyper-V to VMware, but System Center Virtual Machine Manager (SCVMM) to VMware’s management tools:

> With VMware, I can go from "nothing at all" to "fully managed cluster with everything needed for a five nines private cloud setup" in well under an hour. With SCVMM it will take me over a week to get all the bugs knocked out, because even after you get the basics set up, there are an infinite number of stupid little nerd knobs and settings that need to be twiddled to make the goddamned thing actually usable.

VMware guy struggling to learn a different way of doing things? There might be a little of that; but Pott makes a fair point (in another comment) about the difficulty, with Hyper-V, of isolating the hypervisor platform from the virtual machines it is hosting. For example, if your Hyper-V hosts are domain-joined, and your Active Directory (AD) servers are virtualised, and something goes wrong with AD, then you could have difficulty logging in to fix it. Pott is talking about a 15,000 node datacenter, but I have dealt with this problem at a micro level; setting up Windows to manage a non-domain-joined host from a domain-joined client is challenging, even with the help of the scripts written by an enterprising Program Manager at Microsoft. Of course your enterprise AD setup should be so resilient that this cannot happen, but it is an awkward dependency.
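The awkward dependency is easier to see when written down as a graph. The following is only a minimal sketch, not a description of how Hyper-V or AD actually resolve anything: the node names (`hyperv-host`, `active-directory`, `ad-vm`) are hypothetical labels I have made up for the scenario above, and the function simply looks for a loop in a "needs" relationship using a depth-first search.

```python
from typing import Dict, List, Optional


def find_cycle(deps: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one dependency cycle as a list of nodes, or None if there is none."""
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, fully explored
    state = {node: WHITE for node in deps}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        state[node] = GREY
        path.append(node)
        for dep in deps.get(node, []):
            if state.get(dep, WHITE) == GREY:
                # dep is already on the current path, so we have looped back to it
                return path[path.index(dep):] + [dep]
            if state.get(dep, WHITE) == WHITE:
                found = visit(dep)
                if found:
                    return found
        path.pop()
        state[node] = BLACK
        return None

    for node in deps:
        if state[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None


# Hypothetical model of the scenario: "X needs Y" is written as X -> [Y].
deps = {
    "hyperv-host": ["active-directory"],  # domain-joined host logs in via AD
    "active-directory": ["ad-vm"],        # AD runs inside a virtual machine
    "ad-vm": ["hyperv-host"],             # that VM runs on the Hyper-V host
}

print(find_cycle(deps))
```

Breaking any one edge, for example by keeping a physical domain controller or a non-domain-joined management host, removes the loop in this toy model.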

Writing about enterprise computing is a challenge for journalists because of the difficulty of getting hands-on experience or objective insight from practitioners; vendors of course are only too willing to show off their stuff but inevitably they paint with a broad brush and with obvious self-interest. Much of IT is about the nitty-gritty. I do a little work with small businesses partly to get some kind of real-world perspective. Even the little I do is educational.

For example, recently I renewed the certificate used by a Microsoft Dynamics CRM installation. Renewing and installing the certificate was easy; but I neglected to set permissions on the private key so that the CRM service could access it, so it did not work. There was a similar step needed on the ADFS server (because this is an internet-facing deployment); it is not an intuitive process because the errors which surface in the event viewer often do not pinpoint the actual problem, but rather are a symptom of the problem. It does not help that the CRM Email Router, when things go wrong, logs an identical error event every few seconds, drowning out any other events.
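When one component floods the log like this, one way to find the events that matter is to count identical entries and list the rarest first. The sketch below assumes the events have already been exported as simple `(source, message)` pairs; the source names and messages are placeholders of my own, not real CRM or ADFS log text.

```python
from collections import Counter
from typing import Iterable, List, Tuple

Event = Tuple[str, str]  # (source, message)


def rarest_first(events: Iterable[Event]) -> List[Tuple[Event, int]]:
    """Count identical events and return them with the least frequent first."""
    counts = Counter(events)
    # sorted() is stable, so events with equal counts keep first-seen order
    return sorted(counts.items(), key=lambda item: item[1])


# Placeholder data: one noisy source repeating itself around a single rare event.
events = [
    ("email-router", "placeholder error A"),
    ("email-router", "placeholder error A"),
    ("adfs", "placeholder error B"),
    ("email-router", "placeholder error A"),
]

for (source, message), count in rarest_first(events):
    print(f"{count:>4}  {source}: {message}")
```

The repeated event still appears, but only once and at the bottom, so the one-off entry that may point at the real problem is no longer buried.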

In other words, I have shared some of the pain of sysadmins and know what Pott means by “stupid little nerd knobs”.

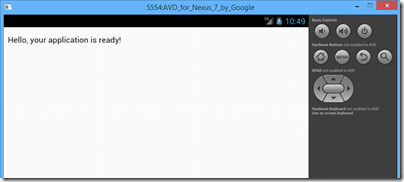

Getting back to the point, I have installed System Center, including Virtual Machine Manager, in my own lab, and it was challenging. System Center is a suite of products developed at different times and sometimes originating from different companies (Orchestrator, for example), and this shows in a lack of consistency in the user interface, and in occasional confusing overlap in functionality.

I have a high regard for Hyper-V itself, having found it a solid and fast performer in my own use and an enormous advance over working with physical servers. The free management tool that you can install on Windows 7 or 8 is also rather good. The free Hyper-V server you can download from Microsoft is one of the best bargains in IT. Feature-wise, Hyper-V has improved rapidly with each new release and it seems to me a strong offering.

We have also seen from Microsoft’s own Azure cloud platform, which uses Hyper-V for virtualisation, that it is possible to automate provisioning and running Hyper-V at huge scale, controlled by easy-to-use management tools, either browser-based or using PowerShell scripts.

Talk private cloud though, and you are back with System Center with all its challenges and complexity.

Well, now you have the option of Azure Pack, which brings some of Azure’s technology (including its user-friendly portal) to enterprise or hosting provider datacenters. Microsoft needed to harmonise System Center with Azure; and the fact that it is replacing parts of System Center with what has been developed for Azure suggests recognition that the Azure technology is much better; though no doubt installing and configuring Azure Pack also has its challenges.

My last reflection on the above is that ease of use matters in enterprise IT just as it does in the consumer world. Yes, the users are specialists and willing to accept a certain amount of complexity; but if you have reliable tools, with clearly documented steps, that help you to do things right, then there are fewer errors and greater productivity.