I have been working with a small business migrating its email to Office 365. The task seems simple enough: migrate just over 100 mailboxes from on-premise Exchange 2007. There is no requirement for a hybrid deployment, so the normal approach is a cutover migration. You run a script on Exchange at the Office 365 end which sucks up all the mailboxes, creating user accounts as it goes. Once the mailboxes are synched, the script (called a migration batch) synchronises the mailboxes every 24 hours. You then change the MX (DNS) records so that mail goes to Office 365, get users to log on to the new mailboxes, and decommission the on-premise Exchange.

It sounds straightforward, and I am sure it works fine with small mailboxes and just a few of them. It is meant to work for up to 1000 mailboxes though, so I did not think just 100 would cause any problems.

Here is what I discovered.

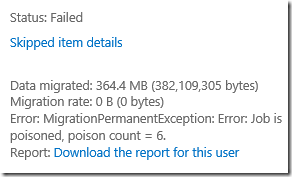

We soon ran into problems. The migration batch seems remarkably slow, partly because of the ADSL connection (fast download but slow upload), but slower even than that would suggest. Some mailboxes report “Failed” for a variety of reasons, the most common being that they simply stop synching for no apparent reason, or in some cases never start synching. Here are some of the error messages:

- Error: MigrationPermanentException: Error: Job is poisoned, poison count = 6.

- Error: SyncTimeoutException: Email migration failed for this user because no email could be downloaded for 5 hours, 0 minutes.

- Error: MigrationPermanentException: Error: The job has not made progress since 28/07/2013 08:43:19. Job was picked up at 27/07/2013 17:11:15.
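With more than a handful of failures it helps to see which kinds of error dominate. The following is a minimal sketch, assuming you have copied the error strings out of the migration report into a Python list by hand. The `classify` helper and the regular expression are my own illustration, not part of any Microsoft tool.

```python
import re
from collections import Counter

# Matches "Error: <SomethingException>: <detail>" as seen in the messages above.
ERROR_PATTERN = re.compile(r"^Error:\s*(?P<kind>\w+Exception):\s*(?P<detail>.*)$")


def classify(message):
    """Split a migration error string into (exception type, detail)."""
    text = message.strip()
    match = ERROR_PATTERN.match(text)
    if match is None:
        return ("Unknown", text)
    detail = match.group("detail")
    # Some messages repeat "Error: " inside the detail; drop the repeat.
    if detail.startswith("Error: "):
        detail = detail[len("Error: "):]
    return (match.group("kind"), detail)


# The three messages quoted above, pasted in by hand.
messages = [
    "Error: MigrationPermanentException: Error: Job is poisoned, poison count = 6.",
    "Error: SyncTimeoutException: Email migration failed for this user because "
    "no email could be downloaded for 5 hours, 0 minutes.",
    "Error: MigrationPermanentException: Error: The job has not made progress "
    "since 28/07/2013 08:43:19. Job was picked up at 27/07/2013 17:11:15.",
]

counts = Counter(classify(m)[0] for m in messages)
for kind, count in counts.most_common():
    print(kind, count)
```

This only groups the messages. It says nothing about what causes them.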

So what do you do if you get these errors?

My first observation is that documentation for cutover migration is thin. It makes little provision for anything going wrong. It mentions a few things to watch for (such as messages over 25MB or hidden addresses in Exchange) but nothing about “job is poisoned”.

Another observation is that the Cutover Migration Batch is lacking in common-sense options. For example, with a slow-ish connection you might prefer to migrate only two or three mailboxes at a time, rather than the 16 that seems to be the fixed number.
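A back-of-envelope calculation shows why the upload side of the line and the number of concurrent mailboxes matter. This is only a rough sketch: the average mailbox size and the upload speed below are hypothetical figures chosen for illustration, not measurements from this migration, and the sum ignores protocol overhead and throttling. Only the counts of 100 mailboxes and 16 concurrent syncs come from the account above.

```python
def transfer_days(total_gb, upload_mbps):
    """Days needed to push total_gb gigabytes over an upload_mbps link."""
    total_bits = total_gb * 1e9 * 8           # decimal gigabytes to bits
    seconds = total_bits / (upload_mbps * 1e6)
    return seconds / 86400                    # seconds per day


MAILBOXES = 100        # "just over 100" in this migration
CONCURRENT = 16        # mailboxes the batch appears to sync at once
AVG_MAILBOX_GB = 2.0   # ASSUMPTION: hypothetical average mailbox size
UPLOAD_MBPS = 1.0      # ASSUMPTION: hypothetical ADSL upload speed

total_days = transfer_days(MAILBOXES * AVG_MAILBOX_GB, UPLOAD_MBPS)
per_mailbox_kbps = UPLOAD_MBPS * 1000 / CONCURRENT

print(f"Initial sync: roughly {total_days:.1f} days of continuous upload")
print(f"Share per mailbox with {CONCURRENT} in flight: {per_mailbox_kbps:.1f} kbit/s")
```

With those assumed figures the initial sync needs about 18.5 days of uninterrupted upload, and each of the 16 mailboxes in flight gets only 62.5 kbit/s. Syncing two or three at a time would not shorten the total, but each mailbox would finish sooner once started.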

I was also puzzled by the option to “Stop” a migration batch, which you can do using a toolbar button.

What are the consequences of stopping a batch? Can you stop it in the morning, and restart in the evening, to reduce bandwidth during the working day, for example? Or do bad things happen?

I headed for the support community. Unfortunately this is not too good either. There are a number of unfailingly polite Microsoft support people there, but they don’t seem all that well informed about the details of what can go wrong. There is a lot of reference to support articles that might or might not answer the question, or an initial response that doesn’t quite answer the question followed by no satisfactory follow-up, or a retreat to private messages which, judging from the public responses, are not always helpful either.

After being told that it was OK to stop and restart a migration batch, I tried it. It did not work well at all for me. I even got this lovely error message for a Room mailbox:

Error: ProvisioningFailedException: Couldn’t convert the mailbox because the mailbox "mailboxname" is already of the type "Room"

I had better success deleting the migration batch and creating a new one, which is easy because it remembers the connection settings from last time. Mailboxes in progress resumed where they left off, and even some failed mailboxes started synching again.

Still, the detail of this is not so important. Fundamentally, Office 365 seems to me a strong service at a reasonable price (though I like Exchange better than SharePoint), and Microsoft is pushing small businesses towards it so hard that it is becoming difficult to stay on Microsoft’s platform at all unless you migrate – the disappearance of Small Business Server with bundled Exchange makes a small deployment expensive.

This being the case, you would have thought Microsoft would put its best effort into the migration tools, which are critical not only for successful transition, but also as many people’s first impression of the service. I did not expect what look like immature tools with skimpy documentation and poor community support.

Is Microsoft struggling to scale the system quickly enough to meet demand?

In some ways this may actually be good for Microsoft partners, who still have their traditional role of puzzling out these kinds of problems and making life easier for their customers.