I spoke to Dr Steve Scott, NVIDIA’s CTO for Tesla, at the end of the GPU Technology Conference, which has just finished here in Beijing. In the closing session, Scott talked about the future of NVIDIA’s GPU computing chips. NVIDIA releases a new generation of graphics chips every two years:

- 2008 Tesla

- 2010 Fermi

- 2012 Kepler

- 2014 Maxwell

Yes, it is confusing that the Tesla brand, meaning cards for GPU computing, has persisted even though the Tesla family is now obsolete.

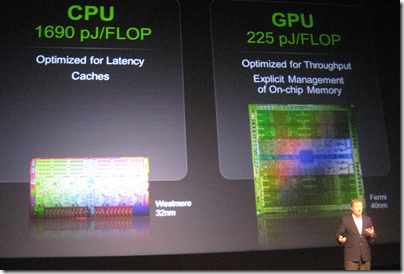

Dr Steve Scott showing off the power efficiency of GPU computing

Scott talked a little about a topic that interests me: the convergence or integration of the GPU and the CPU. The background here is that while the GPU is fast and efficient for parallel number-crunching, it is of course still necessary to have a CPU, and there is a price to pay for the communication between the two. The GPU and the CPU each have their own memory, so data must be copied back and forth, which is an expensive operation.
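The trade-off can be put in rough numbers. The Python sketch below is a back-of-the-envelope model, not a benchmark. It assumes that a job's input must be copied to the GPU and its output copied back over a link of fixed bandwidth. The function names and every figure used with them are hypothetical. Under those assumptions, offloading only pays off when the GPU's compute time plus both copies is less than the time the CPU would take on its own.

```python
def transfer_seconds(bytes_in: int, bytes_out: int, link_gb_per_s: float) -> float:
    """Time to copy input to the GPU and results back, assuming a fixed link bandwidth."""
    return (bytes_in + bytes_out) / (link_gb_per_s * 1e9)


def offload_seconds(bytes_in: int, bytes_out: int, link_gb_per_s: float,
                    gpu_compute_s: float) -> float:
    """Copy in, compute on the GPU, copy out; the copies are pure overhead."""
    return transfer_seconds(bytes_in, bytes_out, link_gb_per_s) + gpu_compute_s


def worth_offloading(cpu_compute_s: float, bytes_in: int, bytes_out: int,
                     link_gb_per_s: float, gpu_compute_s: float) -> bool:
    """True if the GPU path, including both copies, beats running on the CPU alone."""
    return offload_seconds(bytes_in, bytes_out, link_gb_per_s, gpu_compute_s) < cpu_compute_s


if __name__ == "__main__":
    # Hypothetical job: 800 MB in, 200 MB out, over an assumed 5 GB/s link.
    # The copies alone take about 0.2 s, however fast the GPU is.
    print(offload_seconds(800_000_000, 200_000_000, 5.0, gpu_compute_s=0.05))
    # A short CPU job is better left on the CPU...
    print(worth_offloading(0.2, 800_000_000, 200_000_000, 5.0, 0.05))
    # ...while a long one amortises the copy cost.
    print(worth_offloading(1.0, 800_000_000, 200_000_000, 5.0, 0.05))
```

In this model, sharing memory between CPU and GPU would shrink or remove the `transfer_seconds` term. That is why it matters even when the GPU itself is very fast.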

One solution is for GPU and CPU to share memory, so that a single pointer is valid on both. I asked CEO Jen-Hsun Huang about this, and he held out little hope:

> We think that today it is far better to have a wonderful CPU with its own dedicated cache and dedicated memory, and a dedicated GPU with a very fast frame buffer, very fast local memory, that combination is a pretty good model, and then we’ll work towards making the programmer’s view and the programmer’s perspective easier and easier.

Scott on the other hand was more forthcoming about future plans. Kepler, which is expected in the first half of 2012, will bring some changes to the CUDA architecture which will “broaden the applicability of GPU programming, tighten the integration of the CPU and GPU, and enhance programmability,” to quote Scott’s slides. This integration will include some limited sharing of memory between GPU and CPU, he said.
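The practical difference between separate memories and shared memory comes down to whether data has to be copied at all. The toy Python model below is only an analogy and involves no GPU. A `bytearray` stands in for host memory, a copied `bytearray` stands in for the GPU's own memory, and a `memoryview` of the original buffer plays the part of a single pointer that both sides can use. The kernel and function names are hypothetical.

```python
def toy_kernel(buf) -> None:
    """Hypothetical 'GPU kernel': doubles every byte in place, wrapping at 256."""
    for i in range(len(buf)):
        buf[i] = (buf[i] * 2) % 256


def run_with_separate_memory(host: bytearray) -> int:
    """Discrete-GPU model: copy in, run, copy back. Returns bytes moved."""
    device = bytearray(host)   # copy host -> device memory
    toy_kernel(device)         # host is still unchanged at this point
    host[:] = device           # copy device -> host to see the result
    return 2 * len(host)


def run_with_shared_memory(host: bytearray) -> int:
    """Shared-memory model: both sides use the same buffer. Returns bytes moved."""
    with memoryview(host) as shared:   # a view of the same bytes, not a copy
        toy_kernel(shared)             # writes land directly in host memory
    return 0


if __name__ == "__main__":
    a = bytearray([1, 2, 3])
    print(run_with_separate_memory(a), list(a))
    b = bytearray([1, 2, 3])
    print(run_with_shared_memory(b), list(b))
```

Both runs produce the same result. What differs is the number of bytes moved. Real shared-memory designs still have to handle caching and synchronisation between the two processors, which this toy ignores.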

What caught my interest, though, was his remark that at some future date NVIDIA will probably build CPU functionality into the GPU. That might take the form, he said, of a couple of cores on the GPU that handle the CPU functions, most likely an implementation of the ARM CPU.

Note that this is not promised for Kepler nor even for Maxwell but was thrown out as a general statement of direction.

There are a couple of further implications. One is that NVIDIA plans to reduce its dependence on Intel. ARM is a better partner, Scott told me, because its designs can be licensed by anyone. It is not surprising, then, that Intel’s multi-core evangelist James Reinders was dismissive when I asked him about NVIDIA’s claim that the GPU is far more power-efficient than the CPU. Reinders says that the forthcoming MIC (Many Integrated Core) processors, codenamed Knights Corner, are a better solution, pointing to the:

> … substantial advantages that the Intel MIC architecture has over GPGPU solutions that will allow it to have the power efficiency we all want for highly parallel workloads, but able to run an enormous volume of code that will never run on GPGPUs (and every algorithm that can run on GPGPUs will certainly be able to run on a MIC co-processor).

In other words, Intel foresees a future without the need for NVIDIA, at least in terms of general-purpose GPU programming, just as NVIDIA foresees a future without the need for Intel.

Incidentally, Scott told me that he left Cray for NVIDIA because of his belief in the superior power efficiency of GPUs. He also described how the Titan supercomputer operated by the Oak Ridge National Laboratory in the USA will be upgraded from its current CPU-only design to incorporate thousands of NVIDIA GPUs, with the intention of achieving twice the speed of Japan’s K computer, currently the world’s fastest.

This whole debate also has implications for Microsoft and Windows. Huang says he is looking forward to Windows on ARM, which makes sense given NVIDIA’s future plans. That said, the impression I get from Microsoft is that Windows on ARM is not intended to be the same as Windows on x86 save for the change of processor. Rather, I suspect that Windows on ARM is Microsoft’s iOS: a locked-down operating system that will be safer for users and more profitable for Microsoft, as app sales are channelled through its store. That is all very well, but it suggests that we will still need x86 Windows, if only to retain open access to the operating system.

Another interesting question is what will happen to Microsoft Office on ARM. It may be that x86 Windows will still be required for the full features of Office.

This means we cannot assume that Windows on ARM will be an instant hit; much is uncertain.

Always intrigued to get examples of what people believe win32 enables that WinRT doesn’t, specifically…

If GPU and CPU are merged, wouldn’t that give less choice to advanced users (read geeks) when it comes to configuring the system for specific uses, gaming for example?