Gartner has recently delivered a report called "Why Microsoft Teams will soon be just as common as Outlook", which gave me pause for reflection.

The initial success of Office 365 was almost all to do with email. Hosted Exchange at a reasonable cost is an obvious win for businesses that were formerly running on-premises Exchange or Small Business Server. Microsoft worked to make the migration relatively seamless, and with strong Active Directory support it can be done with users hardly noticing. Exchange of course is more than just email, also handling calendars and tasks, and Outlook and Exchange are indispensable tools for many businesses.

The other pieces of Office 365, such as SharePoint, OneDrive and Skype for Business (formerly Lync), took longer to gain traction, in part because of flaws in the products. Exchange has always been an excellent email server, but in cloud document storage and collaboration Microsoft’s solution was less good than alternatives like Dropbox and Box. Ties to desktop Office are a mixed blessing: welcome because Office is familiar and capable, but also causing friction thanks to the need for old-style software installations.

Microsoft needed to up its game in areas beyond email, and to its credit it has done so. SharePoint and OneDrive are much improved. In addition, the company has introduced a range of additional applications, including StaffHub for managing staff schedules, Planner for project planning and task assignment, and PowerApps for creating custom applications without writing code.

We have also seen a boost to the cloud-based Dynamics suite thanks to synergy between this and Office 365.

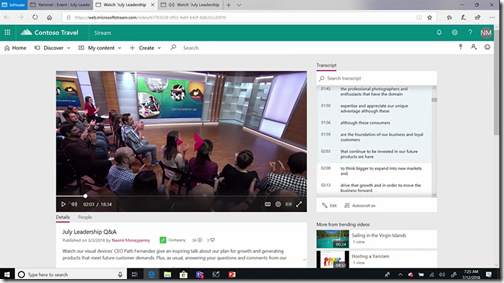

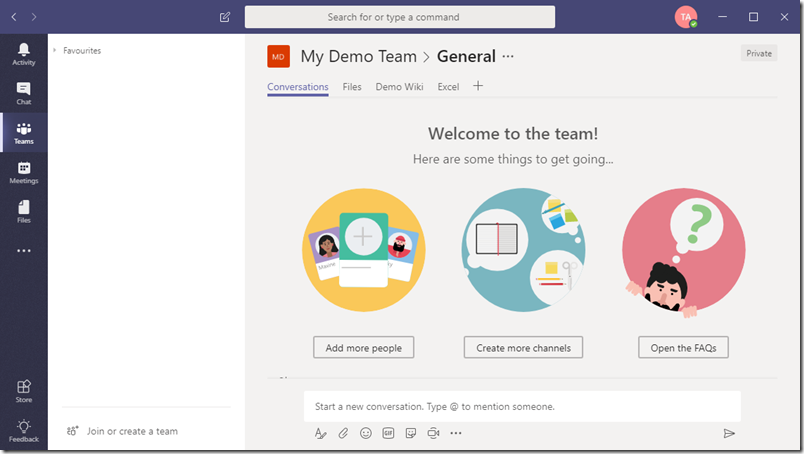

Having lots of features is one thing, winning adoption is another. Microsoft lacked a unifying piece that would integrate these various elements into a form that users could easily embrace. Teams is that piece. When it was introduced in March 2017, I initially thought there was nothing much to it: just a new user interface for existing features like SharePoint sites and Office 365/Exchange groups, with yet another business messaging service alongside Skype for Business and Yammer.

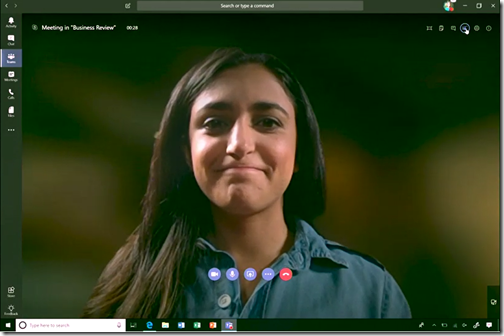

Software is about usability as much as or more than features though, and Teams caught on. Users quickly demanded deeper integration between Teams and other parts of Office 365. It soon became obvious that from the user’s perspective there was too much overlap between Teams and Skype for Business, and in September 2017 Microsoft announced that Teams would replace Skype for Business, though this merging of two different tools is not yet complete.

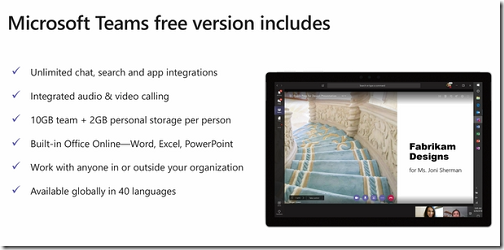

To see why Teams has such potential you need only click Add a tab in the Windows client. Your screen fills with stuff you can add to a Team, from document links to Planner to third-party tools like Trello and Evernote.

This is only going to grow. Users will open Teams at the beginning of the day and live there, which is exactly the point Gartner is making in its attention-grabbing title.

A good thing? Well, collaboration is good, and so is making better use of what you are paying for with an Office 365 subscription, so it has merit.

The part that troubles me is that we are losing diversity as well as granting Microsoft a firmer hold on its customers.

It all started with email, remember. But email is a disaster, replete with unwanted marketing, malware links, and some number of communications that have some possible value but which life is too short to investigate. In the consumer world, people prefer the safer world of Facebook Messenger or WhatsApp, where messages are more likely to be wanted. Email is also ancient, hard to extend with new features, and generally insecure.

Business-oriented messaging software like Slack and now Teams has moved in, to give users a safer and more usable way of communicating with colleagues. Consumers prefer Facebook’s walled garden to the internet jungle, and business users are no different.

It is a trade-off though. Email, for all its faults, is open and has multiple providers. Teams is not.

This will not stop Teams from succeeding, even though there are plenty of user requests and considerable dissatisfaction with the current release. Performance can be poor, the clients for Mac and mobile are not as good as the one for Windows, and there is no Linux client at all.

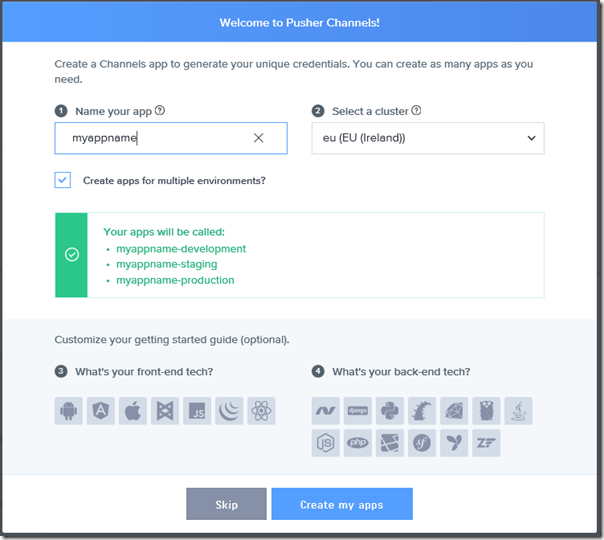

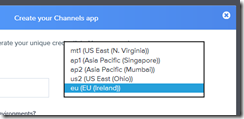

Third parties with applications or services that make sense in the Teams environment should hasten to make their stuff available there.