People have been talking about “the internet operating system” for years. The phrase may have been muttered in Netscape days in the nineties, when the browser was going to be the operating system; then in the 2000s it was the Google OS that people discussed. Most notably though, Tim O’Reilly reflected on the subject, for example here in 2010 (though as he notes, he had been using the phrase way earlier than that):

> Ask yourself for a moment, what is the operating system of a Google or Bing search? What is the operating system of a mobile phone call? What is the operating system of maps and directions on your phone? What is the operating system of a tweet?
>
> On a standalone computer, operating systems like Windows, Mac OS X, and Linux manage the machine’s resources, making it possible for applications to focus on the job they do for the user. But many of the activities that are most important to us today take place in a mysterious space between individual machines.

It is still worth reading, as he teases out what OS components look like in the context of an internet operating system, and notes that there are now several (but only a few) competing internet operating systems: platforms which our smartphones and tablets tap into, and which to some extent lock us in.

But what on earth (or in the heavens) is Microsoft’s “Cloud OS”? I first heard the term in the context of Server 2012, when it was in preview at the end of 2011. Microsoft seems to like how it sounds, because it is getting another push in the context of System Center 2012 Service Pack 1, just announced. In particular, Michael Park from Server and Tools has posted on the subject:

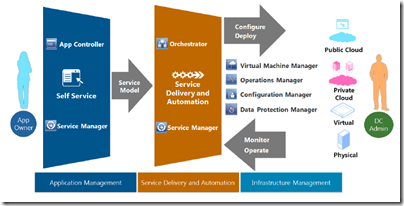

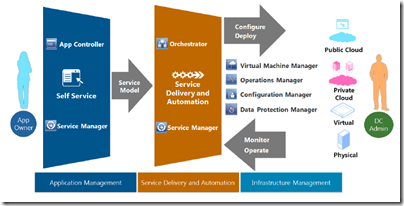

> At the highest level, the Cloud OS does what a traditional operating system does – manage applications and hardware – but at the scope and scale of cloud computing. The foundations of the Cloud OS are Windows Server and Windows Azure, complemented by the full breadth of our technology solutions, such as SQL Server, System Center and Visual Studio. Together, these technologies provide one consistent platform for infrastructure, apps and data that can span your datacenter, service provider datacenters, and the Microsoft public cloud.

In one sense, the concept is similar to that discussed by O’Reilly, though in the context of enterprise computing, whereas O’Reilly looks at a bigger picture embracing our personal as well as business lives. Never forget though that this is marketing speak, and Microsoft consciously works to blur together the idealised principles behind cloud computing with its specific set of products: Windows Azure, Windows Server, and especially System Center, its server and device management piece.

A nagging voice tells me there is something wrong with this picture. It is this: the cloud is meant to ease the administrative burden by making compute power an abstracted resource, managed by a third party far away in a datacenter in ways that we do not need to know. System Center on the other hand is a complex and not altogether consistent suite of products which imposes a substantial administrative burden on those who install and maintain it. If you have to manage your own cloud, do you get any cloud computing benefit?

The benefit is diluted; but there is plentiful evidence that many businesses are not yet ready or willing to hand over their computer infrastructure to a third party. While System Center is in one sense the opposite of cloud computing, in another sense it counts, because it lets the IT department that runs it deliver cloud benefits to the rest of the business.

Further confusing matters, there are elements of public cloud in Microsoft’s offering, specifically Windows Azure and Windows Intune. Other bits of Microsoft’s cloud, like Office 365 and Outlook.com, do not count here because that is another department, see. Park does refer to them obliquely:

> Running more than 200 cloud services for over 1 billion customers and 20+ million businesses around the world has taught us – and teaches us in real time – what it takes to architect, build and run applications and services at cloud scale.
>
> We take all the learning from those services into the engines of the Cloud OS – our enterprise products and services – which customers and partners can then use to deliver cloud infrastructure and services of their own.

There you have it. The Cloud OS is “our enterprise products and services” which businesses can use to deliver their own cloud services.

What if you want to know in more detail what the Cloud OS is all about? Well, then you have to understand System Center, which is not something that can be explained in a few words. I did have a go at this, in a feature called Inside Microsoft’s private cloud – a glossary of terms, for which the link is currently giving a PHP error, but maybe it will work for you.

It will all soon be a little out of date, since System Center 2012 SP1 has significant new features. If you want a summary of what is actually new, I recommend this post by Mike Schutz on System Center 2012 SP1; and this post also by Schutz on Windows Intune and System Center Configuration Manager SP1.

My even shorter summary:

- All System Center products now updated to run on, and manage, Server 2012

- Upgraded Virtual Machine Manager supports up to 8000 VMs on clusters of up to 64 hosts

- Management support for Hyper-V features introduced in Server 2012 including the virtual network switch

- App Controller integrates with VMs offered by hosting service providers as well as those on Azure and in your own datacenter

- App Controller can migrate VMs to Windows Azure (and maybe back); a nice feature

- New Azure service called Global Service Monitor for monitoring web applications

- Back up servers to Azure with Data Protection Manager

On the device and client management side, there are new Intune and Configuration Manager features. It is confusing; Intune is a kind of cloud-based Configuration Manager, but it has features that are not included in the on-premise Configuration Manager, and vice versa. So:

- Intune can now manage devices running Windows RT, Windows Phone 8, Android and iOS

- Intune has a self-service portal for installing business apps

- Configuration Manager integrates with Intune to get supposedly seamless support for additional devices

- Configuration Manager adds support for Windows 8 and Server 2012

- PowerShell control of Configuration Manager

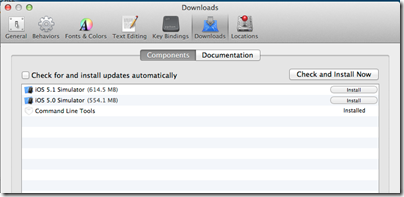

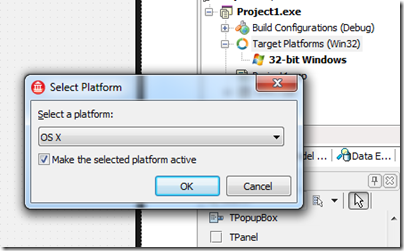

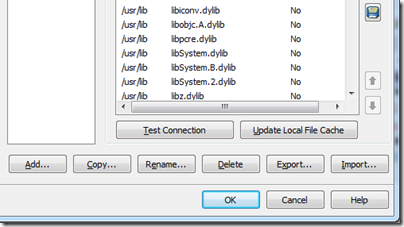

- Ability to manage Mac OS X, Linux and Unix servers in Configuration Manager
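To put the Virtual Machine Manager figures from the summary above in perspective, here is a rough back-of-envelope sketch. It uses only the two limits quoted there (8000 VMs per cluster, 64 hosts per cluster); the function names are my own, and it ignores what really constrains a deployment, such as memory, CPU and storage per host.

```python
import math

# Limits quoted in the summary above; everything else here is illustrative.
MAX_VMS_PER_CLUSTER = 8000
MAX_HOSTS_PER_CLUSTER = 64


def average_vms_per_host(vms=MAX_VMS_PER_CLUSTER, hosts=MAX_HOSTS_PER_CLUSTER):
    """Average VM density per host for a given cluster size."""
    if hosts <= 0:
        raise ValueError("hosts must be positive")
    return vms / hosts


def clusters_needed(total_vms):
    """Minimum number of clusters if each holds at most MAX_VMS_PER_CLUSTER VMs."""
    if total_vms < 0:
        raise ValueError("total_vms must not be negative")
    return math.ceil(total_vms / MAX_VMS_PER_CLUSTER)


if __name__ == "__main__":
    # A fully populated cluster averages 125 VMs per host.
    print(average_vms_per_host())
    # 20,000 VMs would need at least 3 clusters by the VM limit alone.
    print(clusters_needed(20000))
```

In other words, the headline limit only applies if every one of the 64 hosts can carry around 125 VMs, which is a question about hardware rather than about System Center.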

What do I think of System Center? On the plus side, all the pieces are in place to manage not only Microsoft servers but a diverse range of servers and a similarly diverse range of clients and devices, presuming the features work as advertised. That is a considerable achievement.

On the negative side, my impression is that Microsoft still has work to do. What would help would be more consistency between the Azure public cloud and the System Center private cloud; a reduction of the number of products in the System Center suite; a consistent user interface across the entire suite; and simplification along the lines of what has been done in the new Azure portal so that these products are easier and more enjoyable to use.

I would add that any business deploying System Center should be thinking carefully about what they still feel they need to manage on-premise, and what can be handed over to public cloud infrastructure, whether Azure or elsewhere. The ability to migrate VMs to Azure could be a key enabler in that respect.